Agencies whose clients are cited by AI are becoming the new default vendors.

AI answer systems do not select results based on rankings alone. They choose sources they can interpret clearly, verify consistently, and reuse safely in generated responses. When client sites lack this structural clarity, they can be left out of answers even when they dominate traditional search results.

AI systems evaluate meaning across an entire site, not just individual pages. Most websites were built for human navigation, where context is assembled gradually as a visitor moves from page to page. Models, by contrast, must often interpret pages in isolation, without shared assumptions, and they favor content that communicates expertise explicitly and consistently.

- Clients can disappear from AI answers while still ranking on page one

- Competitors become the cited experts and win the recommendation layer

- Performance appears to decline despite strong SEO metrics

- Renewal risk increases as clients question results they cannot see

- Long-term account value erodes as visibility shifts to AI interfaces

This shift is already affecting agencies whose clients depend on traditional search engines for new business.

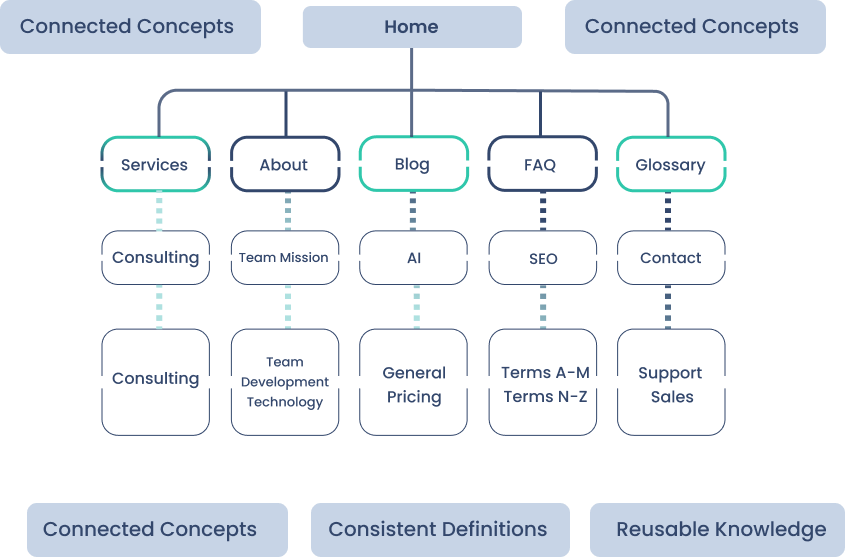

SearchShifter™ addresses this structural gap by creating a coherent knowledge framework across the site. It makes concepts explicit, terminology consistent, relationships clear, and signals machine-readable, enabling AI systems to recognize, trust, and reuse the organization as a source.

The Shift We Observed

Source: Thrivx.ai

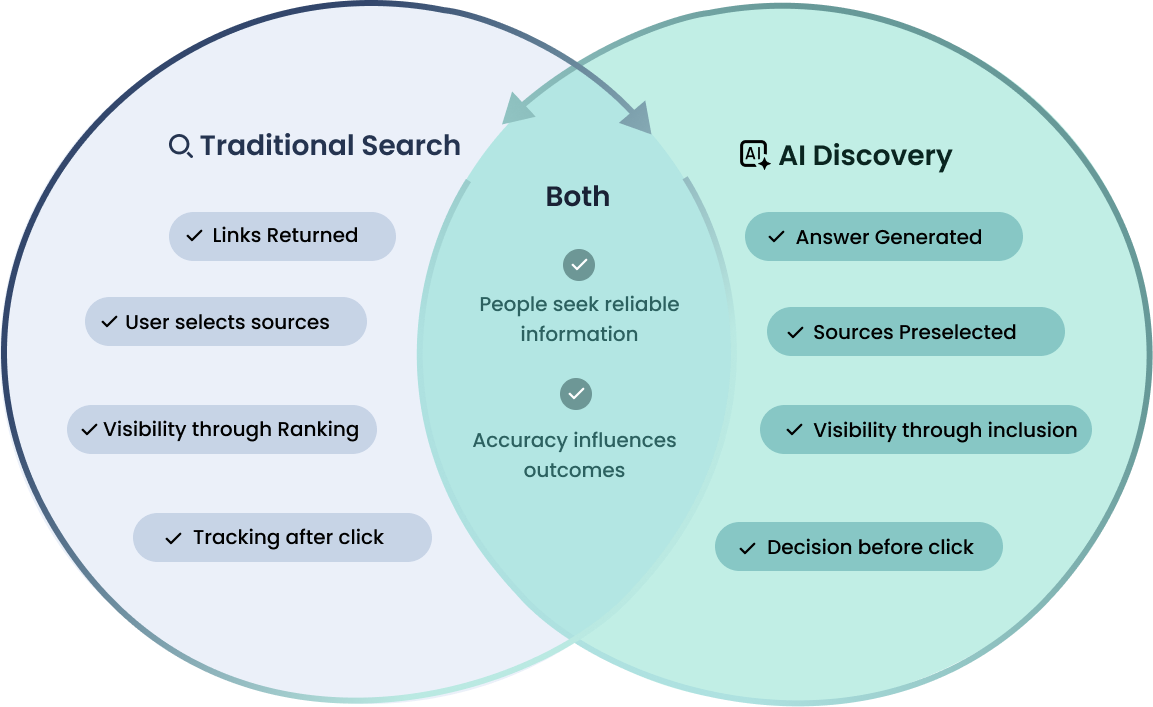

For years, search meant returning a list of links and letting users decide which sources to explore. If a page ranked well, it had an opportunity to be seen, evaluated, and chosen.

AI-driven discovery changes that sequence. Instead of presenting options, these systems generate a direct answer and preselect the sources used to create it. Visibility depends on whether a site is included in that answer, not simply whether it ranks.

Organizations began noticing that accurate pages were sometimes absent from AI responses or described inconsistently. This was not a ranking issue. It reflected how large language models process information, resolve ambiguity, and select material that can be used safely within an answer.

The goal for users has not changed. People still seek reliable information, and accuracy continues to influence outcomes. What has changed is when and how sources are evaluated. In traditional search, selection happens after the click. In AI discovery, it often happens before the user ever visits a website.

This shift has significant implications for organizations and agencies. A site can rank highly yet still be absent from answers if its content cannot be interpreted confidently. When sources are preselected, exclusion means the opportunity to influence the decision may never occur.

For teams managing multiple sites, the impact compounds. Across every property they manage, visibility is no longer determined solely by position in results but by whether content can be safely incorporated into generated responses.

Why Existing Approaches Were Not Designed for AI Discovery

Fragmented Website vs. Coherent Knowledge Structure

Source: Thrivx.ai

Traditional optimization methods were developed for search engines that return lists of links. Their purpose is to help pages be discovered, ranked, and visited. They were not designed to ensure that information can be interpreted and reused inside generated answers.

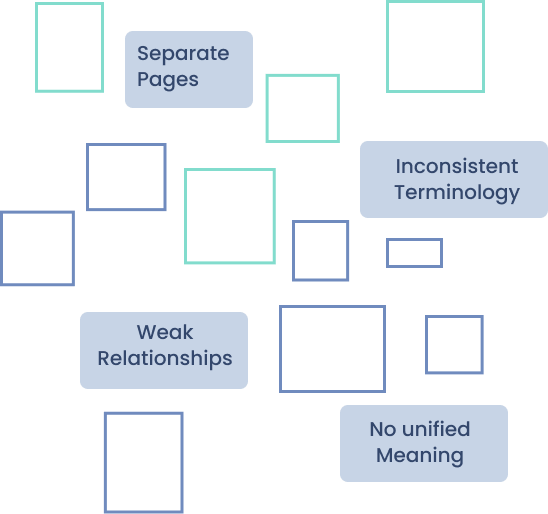

Most websites are organized as collections of individual pages targeting specific topics or keywords. While effective for search indexing, this structure can fragment meaning across a site. AI systems evaluate whether information forms a coherent body of knowledge, not just whether individual pages are relevant.

Common patterns such as inconsistent terminology, implicit assumptions, and disconnected explanations can introduce ambiguity even when the content itself is accurate. When uncertainty exists, AI systems tend to favor sources that express meaning explicitly and consistently.

These limitations are structural rather than tactical. They arise from the difference between designing content for human navigation and designing information that can be interpreted without context. As a result, many well-optimized sites remain difficult for AI systems to use as authoritative sources.

Technical enhancements such as schema markup and structured data can clarify page types, but they do not ensure alignment of definitions, terminology, or relationships across the entire site. A site may therefore appear well optimized yet still be difficult for AI systems to incorporate reliably into an answer.
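To make the terminology point concrete, here is a minimal, hypothetical Python sketch of one kind of site-wide check that page-level markup does not cover: flagging concepts that a site describes with more than one phrasing. It is an illustration under stated assumptions, not SearchShifter™'s method. The glossary `CONCEPT_VARIANTS`, the sample pages, and the function names are all invented for this example, and simple phrase matching is only a rough stand-in for how an AI system actually resolves meaning.

```python
import re
from collections import defaultdict

# Hypothetical glossary: each concept maps to phrasings that may refer to the
# same idea. A real site would need its own list; these entries are illustrative.
CONCEPT_VARIANTS = {
    "AI discovery": ["ai discovery", "ai-driven discovery", "answer-based discovery"],
    "structured data": ["structured data", "schema markup"],
}


def variant_usage(pages, concept_variants):
    """Map each concept to {variant: [urls that use that variant]} across all pages."""
    usage = defaultdict(lambda: defaultdict(list))
    for url, text in pages.items():
        lowered = text.lower()
        for concept, variants in concept_variants.items():
            for variant in variants:
                # Whole-phrase match so a variant is not counted inside longer words.
                if re.search(r"\b" + re.escape(variant) + r"\b", lowered):
                    usage[concept][variant].append(url)
    return usage


def inconsistent_concepts(pages, concept_variants):
    """Return only the concepts expressed with more than one phrasing across the site."""
    usage = variant_usage(pages, concept_variants)
    return {
        concept: dict(by_variant)
        for concept, by_variant in usage.items()
        if len(by_variant) > 1
    }


# Hypothetical sample pages keyed by URL path.
pages = {
    "/services": "Our AI-driven discovery audit reviews schema markup.",
    "/about": "We help clients prepare for answer-based discovery.",
    "/blog/ai": "AI discovery is changing how buyers research vendors.",
}

print(inconsistent_concepts(pages, CONCEPT_VARIANTS))
```

In this sample, each page describes the same idea with a different phrase, so "AI discovery" is flagged with the page that uses each variant, while "structured data" appears under only one phrasing and is not flagged. Every page is individually accurate; the inconsistency only becomes visible when the site is read as a whole.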

The Insight Behind SearchShifter™

SearchShifter™ originated from a central observation: websites are written for human readers but increasingly evaluated by systems that do not share human context. Information that is clear to a person navigating a site may remain ambiguous to a model processing content in isolation.

This revealed a fundamental mismatch between how websites are traditionally constructed and how AI systems process information. Effective representation in generated answers requires not only accurate content but also a coherent structure that makes meaning explicit without relying on navigation, prior knowledge, or implicit assumptions.

Large language models assemble answers by combining fragments from multiple sources. To do this reliably, they favor material that expresses meaning explicitly, uses consistent terminology, and maintains stable relationships between concepts across pages. When those conditions are not present, even authoritative content may be underused or represented inconsistently.

SearchShifter™ was developed as a practical response to this mismatch. Rather than replacing existing content or optimization strategies, it focuses on making site meaning consistent, connected, and interpretable at the level required for reliable AI reuse. The objective is reliable representation, not merely increased visibility.

- Agencies are increasingly responsible not only for how client websites rank, but also for how those clients are represented in AI-generated answers. When potential customers request recommendations, comparisons, or explanations, the information included in those responses can shape perception before a user ever visits a site. If a client’s content cannot be interpreted clearly, it may be omitted, summarized inaccurately, or replaced by sources that communicate meaning more explicitly. This can occur even when the underlying information is accurate and the site performs well in traditional search results.

- Because agencies manage multiple sites, the impact is cumulative. Inconsistent terminology, fragmented explanations, or implicit assumptions in client content can introduce uncertainty that affects whether those clients are selected as sources, and small interpretability issues repeated across a portfolio can compound into significant differences in visibility.

- This shift also changes how competitive advantage is established. Clients with clearer, more interpretable information can be favored even when competitors offer similar expertise. Agencies that ensure their clients' expertise is consistently understood and reusable by AI systems can influence how those clients appear in recommendations, comparisons, and decision-making contexts.

- SearchShifter™ provides a scalable way to address these risks across one site or many without requiring a redesign of each property.

About Thrivx.ai

SearchShifter™ is developed by Thrivx.ai and created by Denise Wallace. Thrivx.ai is a software company focused on how websites are discovered, interpreted, and represented in AI-driven environments. Its work centers on the structural conditions that determine whether online information can be reused accurately in generated answers.

SearchShifter™ is the company’s primary product and was developed to address the mismatch between human-oriented web content and machine interpretation. The organization’s ongoing focus is ensuring that expertise published online can be conveyed reliably as answer-based interfaces become a dominant form of discovery.

Founder

SearchShifter™ was developed by Denise Wallace, founder of Thrivx.ai, whose work focuses on how large language models discover, interpret, and represent web-based information. Her research examines the conditions required for accurate reuse of online content in AI-generated responses and the structural factors that influence representation in answer-based systems.

Preparing for AI-Driven Discovery

Organizations that depend on accurate representation in AI-generated answers need their information to be interpretable, consistent, and usable by automated systems, not merely accessible online.

Download the free plugin to assess how your site performs as answer-based discovery becomes a primary way people find information.

Organizations whose information AI systems cannot interpret risk being excluded from consideration entirely.

Early evaluation allows organizations to understand how they are currently represented before competitors define the narrative on their behalf.